This interactive viewer accompanies our paper, Agravat et al., "Auditory cortex preferentially tracks speech over music without explicit attention."

In our study, participants from early childhood through late adolescence watched movies containing both speech and music while we recorded brain activity from intracranial electrodes.

We asked: do different areas of the brain prefer one type of sound over the other?

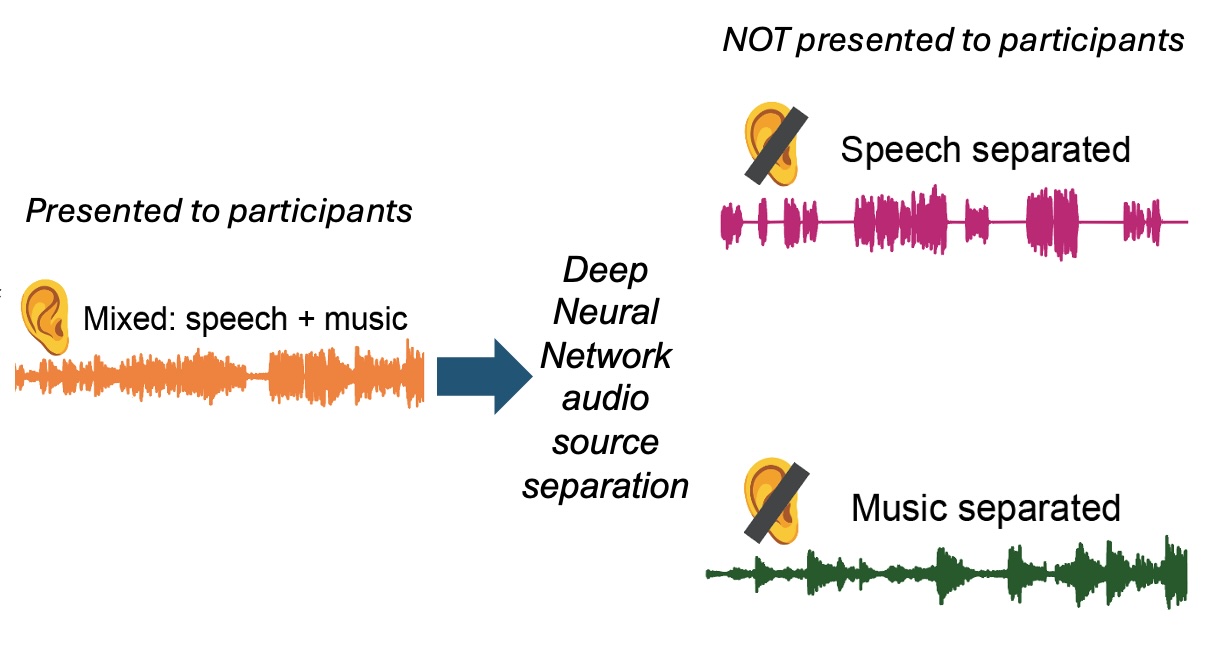

Participants were presented with mixed audio containing both speech and music from movies.

We used deep neural network audio source separation to computationally split the mixed audio into a speech-separated track and a music-separated track. These separated tracks were NOT presented to participants; they heard only the mixed version.

This separation allowed us to test whether auditory cortex preferentially tracks speech or music content, even when participants weren't explicitly attending to either.
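To make the comparison concrete, here is a minimal sketch of one way to ask whether a single electrode tracks speech or music better. It is an illustration under our own assumptions, not the analysis pipeline from the paper: the data are synthetic, the stimulus features are simple amplitude envelopes, and the helper names (`lagged`, `heldout_r`), the number of lags, and the ridge penalty are all hypothetical choices. The idea is to predict the neural response from each separated track in turn and compare how well each prediction matches held-out data.

```python
import numpy as np

def lagged(x, n_lags):
    """Stack delayed copies of a 1-D stimulus feature into a (time, lags) matrix."""
    X = np.zeros((len(x), n_lags))
    for k in range(n_lags):
        X[k:, k] = x[:len(x) - k]
    return X

def heldout_r(stim, resp, n_lags=20, alpha=1.0):
    """Fit ridge regression on the first half of the data and
    return the prediction correlation on the second half."""
    X = lagged(stim - stim.mean(), n_lags)
    y = resp - resp.mean()
    half = len(y) // 2
    X_train, X_test = X[:half], X[half:]
    y_train, y_test = y[:half], y[half:]
    # Closed-form ridge solution: w = (X'X + alpha * I)^-1 X'y
    w = np.linalg.solve(X_train.T @ X_train + alpha * np.eye(n_lags),
                        X_train.T @ y_train)
    return np.corrcoef(X_test @ w, y_test)[0, 1]

# Synthetic stand-ins for the separated tracks (assumption: envelope features).
rng = np.random.default_rng(0)
n = 4000
speech_env = np.abs(rng.standard_normal(n))
music_env = np.abs(rng.standard_normal(n))

# Simulated electrode that follows speech strongly and music weakly, plus noise.
kernel = np.exp(-np.arange(10) / 3.0)
neural = (np.convolve(speech_env, kernel)[:n]
          + 0.2 * np.convolve(music_env, kernel)[:n]
          + rng.standard_normal(n))

r_speech = heldout_r(speech_env, neural)
r_music = heldout_r(music_env, neural)
preference = r_speech - r_music  # positive values indicate a speech preference
print(f"speech r = {r_speech:.2f}, music r = {r_music:.2f}")
```

In this toy setup a positive `preference` means the speech-separated track predicts the response better than the music-separated track, even though the simulated response was driven by both.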

Our study includes participants ranging from early childhood through late adolescence. This allows us to examine how speech and music processing in auditory cortex changes across development.

By comparing neural responses across age groups, we can understand whether speech preference in auditory cortex is present early in development or emerges over time.

Click on an electrode to view its neural response properties. Use the Anatomy filter to show different regions of the auditory cortex, and the Development filter to choose among age ranges.
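For readers who want to think about the two filters as operations on data, the sketch below shows one possible way to combine them. It does not describe how the viewer is implemented: the `Electrode` record, its fields, the region labels, and the ages are hypothetical values made up for illustration.

```python
from dataclasses import dataclass

@dataclass
class Electrode:
    label: str        # hypothetical electrode name
    region: str       # anatomical label used by the Anatomy filter
    age_years: float  # participant age used by the Development filter

def filter_electrodes(electrodes, region=None, age_range=None):
    """Keep electrodes that match the selected region and fall in the
    half-open age range [low, high). A filter set to None is ignored."""
    selected = []
    for e in electrodes:
        if region is not None and e.region != region:
            continue
        if age_range is not None and not (age_range[0] <= e.age_years < age_range[1]):
            continue
        selected.append(e)
    return selected

# Made-up example records, not data from the study.
electrodes = [
    Electrode("E1", "superior temporal gyrus", 6.0),
    Electrode("E2", "superior temporal gyrus", 15.5),
    Electrode("E3", "Heschl's gyrus", 7.2),
]

young_stg = filter_electrodes(
    electrodes, region="superior temporal gyrus", age_range=(4, 10)
)
print([e.label for e in young_stg])
```

Applying both filters together narrows the selection to electrodes that satisfy both conditions; leaving a filter unset keeps every electrode for that dimension.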

When you click on an electrode, you will see:

This electrode in superior temporal gyrus shows preferential tracking of speech over music. The neural response is better predicted by the speech component of the mixed audio, even though participants were not explicitly attending to speech.